While one of our favorite ways to gather customer feedback focuses on active listening during one-on-one sessions with customers, customer satisfaction surveys provide an opportunity to poll users on questions that might otherwise go unanswered.

But here’s the thing: Customer satisfaction surveys are only valuable if you ask the right questions, in the best way, at the perfect time. That’s why building and deploying an effective and valuable customer satisfaction survey is no small feat.

Today, we’ll look at some proven ways to turn your surveys into a reliable source of insightful customer information.

Why customer satisfaction surveys are important

Let’s first talk about why customer satisfaction surveys matter for today’s businesses.

Customer satisfaction is one of the few levers brands can still pull to differentiate themselves in crowded and competitive marketplaces. Today, the brand with the best customer experience usually wins.

That’s because, as Qualtrics put it, “High levels of customer satisfaction … are strong predictors of customer and client retention, loyalty, and product repurchase.”

Not to mention, poor customer satisfaction can actively harm your brand. The average American consumer will tell 16 other people about poor customer experiences, and it takes brands an average of 12 positive experiences to make up for one unresolved negative experience.

In other words, the stakes are high when it comes to customer satisfaction and experiences today, and customer satisfaction (or CSAT) surveys are one of the most effective ways for your brand to keep a pulse on how customers are feeling.

When you have access to the data customer satisfaction surveys provide, you can actually take action to improve your customer satisfaction and get proactive about the problems customers face.

That means you can turn negative customer experiences around and improve your overall product and service to delight more customers — leading to better loyalty and retention, higher sales, and less churn overall.

10 customer satisfaction survey best practices

The efficacy of your customer satisfaction data relies on getting honest and accurate answers from your customers. So it’s no surprise that most of the problems we see with customer satisfaction surveys revolve around getting accurate answers from respondents:

No matter what you do, research has shown that there will always be a small minority of people who will lie on your survey, especially when the questions pertain to the three Bs: behavior, beliefs or belonging. (Here’s a review of this topic from Cornell University.)

Furthermore, sometimes people will give inaccurate answers completely by accident. Numerous publications have noted that people find it quite difficult to predict their own future intentions (like whether or not they’ll buy from you again), especially when asked via survey.

Fortunately, research also offers solutions to these consistent problems with surveys. A joint study by SurveyMonkey and the Gallup Group offers some good insights on creating and structuring surveys that can keep these problems to a minimum.

Below, let’s look at the study’s most important takeaways so you can get a clear picture of how to improve your surveys.

1. Keep it short

Your main goal is to be clear and concise, finding the shortest way to ask a question without muddying its intent. It’s not just about reducing the character count — you also need to cut out unnecessary phrasing from your questions.

At the same time, overall survey length remains important for keeping abandonment rates low. Think about the last time you sat around and excitedly answered a 30-minute questionnaire. It’s probably never happened.

2. Only ask questions that fulfill your end goal

In short, be ruthless when it comes to cutting unnecessary questions from your surveys.

Every question you include should have a well-defined purpose and a strong case for being included. Otherwise, send it to the chopping block.

For example, depending on the survey’s purpose, it may not matter how a customer first came in contact with your site. If that’s the case, don’t ask how they found out about you. Do you really need to know a customer’s name? If not, again, don’t ask.

Including questions you thought “couldn’t hurt to ask” only adds unnecessary length to your survey — length that could send survey respondents hunting for the “back” button.

3. Construct smart, open-ended questions

Although it’s tempting to stick with multiple-choice queries and scales, some of your most insightful feedback will come from open-ended questions that allow customers to spill their real thoughts onto the page.

However, nothing makes a survey more intimidating than a huge text box connected to the very first question. It’s best to ask the brief questions first and create a sense of progress. Then give survey takers who’ve made it to the closing questions the opportunity to elaborate on their thoughts.

One strategy is to get people to commit to an answer with a simple opening question, and then follow up with an open-ended question such as, “Why do you feel this way?”

4. Ask one question at a time

We’ve all been hit with an extensive series of questions before: “How did you find our site? Do you understand what our product does? Why or why not?”

It can begin to feel like you’re being interrogated by someone who won’t let you finish your sentences. If you want quality responses, you need to give people time to think through each individual question.

Bombarding people with multiple questions at once leads to half-hearted answers by respondents who are just looking to get through to the end — if they don’t abandon you before then. Instead, make things easy by sticking to one main point at a time.

5. Make rating scales consistent

Common scales used for surveys can become cumbersome and confusing when the context begins to change.

Here’s an example: While answering a survey’s initial questions, you are told to respond by choosing between 1-5, where 1 = “Strongly Disagree” and 5 = “Strongly Agree.”

Later in the survey, however, you are asked to evaluate the importance of certain items. The problem: Now 1 is assigned as “Most Important,” but you had been using 5 as the agreeable answer to every previous question. That’s incredibly confusing. How many people missed this change and gave inaccurate answers completely by accident?
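
One way to catch this kind of flip before a survey goes out is to check the scale definitions programmatically. The sketch below is a minimal illustration in Python. It assumes a hypothetical survey structure in which each question records its scale range and whether the highest number is the favorable end. The names `Question` and `find_inconsistent_scales` are made up for this example and don't come from any survey tool.

```python
from dataclasses import dataclass


@dataclass
class Question:
    """Hypothetical representation of one rating-scale question."""
    text: str
    scale_min: int
    scale_max: int
    high_is_positive: bool  # True if the top number is the favorable end


def find_inconsistent_scales(questions):
    """Return questions whose range or direction differs from the first question."""
    if not questions:
        return []
    first = questions[0]
    reference = (first.scale_min, first.scale_max, first.high_is_positive)
    return [
        q for q in questions
        if (q.scale_min, q.scale_max, q.high_is_positive) != reference
    ]


# Made-up survey for illustration only.
survey = [
    Question("The product is easy to use.", 1, 5, True),
    Question("Support resolved my issue quickly.", 1, 5, True),
    # Here 1 means "Most Important", so the direction is flipped.
    Question("How important is live chat to you?", 1, 5, False),
]

flagged = find_inconsistent_scales(survey)
for q in flagged:
    print(f"Check scale direction: {q.text}")
```

Treating the first question as the reference is only one possible design choice. The point is simply that any question that breaks the pattern gets a second look before customers see it.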

6. Avoid leading and loaded questions

Questions that lead respondents toward a certain answer due to biased phrasing won’t get you valuable or accurate feedback. SurveyMonkey offers a great example of a leading question to avoid:

“We have recently upgraded SurveyMonkey’s features to become a first-class tool. What are your thoughts on the new site?”

This is a clear case of letting pride in your product get in the way of asking a good question. Instead, the more neutral, “What do you think of the recent SurveyMonkey upgrades?” is a better option.

When the goal is to honestly learn something, don’t risk annoying your participants (and muddying your data) with leading questions or other tactics designed to get the responses you want to see.

7. Make use of yes/no questions

When you’re asking a question that has a simple outcome, try to frame the question as a yes/no option.

The SurveyMonkey study showed that these closed-ended questions make for great starter questions because they’re typically easier for customers to evaluate and complete. For example:

“Did our support team make you feel valued as a customer?”

The response to this question doesn’t require a scale (“highly valued,” “valued,” “not valued,” etc.). A simple yes-or-no option is easier for the customer and should give you all the information you need. Plus, you can follow it with an open-ended question, such as:

“What did our team do to make you feel valued?”

8. Get specific and avoid assumptions

When you create questions that assume a customer is knowledgeable about something, you’re likely going to run into problems (unless you are surveying a very targeted subset of people).

One big culprit is the language and terminology you use in questions, which is why we recommend staying away from industry acronyms, buzzwords, jargon, and insider references.

Similarly, one of the worst assumptions you can make is to assume people will answer with specific examples or explain their reasoning. It’s better to ask them to be specific and let them know you welcome this sort of feedback:

“How do you feel about [blank]? Feel free to get specific; we love detailed feedback!”

9. Think about your timing

Interestingly, the SurveyMonkey study we referenced above found that the highest survey open and click-through rates occurred on Monday, Friday, and Sunday.

There was no discernible difference between the response quality gathered on weekdays versus weekends, either, so your best bet is to seek out survey-takers first thing during a new week or to wait for the weekend.

Many companies conduct customer surveys once a year, or at most, once per quarter. And while that’s great, it’s not enough to keep a real pulse on customer satisfaction — you don’t want to wait 90 days to find out your customer is unhappy.

Between full surveys, you’ll want to keep a keen eye on your customer satisfaction ratings and other metrics. Reporting tools (such as Help Scout reports) can help you turn every conversation with a customer into a feedback session.
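
If you export those ratings, a common way to summarize them is a CSAT score: the share of responses that count as satisfied. The sketch below assumes a 1-5 scale and treats 4 and 5 as satisfied, which is a widespread convention but an assumption here, not something this article specifies. `csat_score` is a hypothetical helper, and the ratings are made up.

```python
def csat_score(ratings, satisfied_threshold=4):
    """Percentage of ratings at or above the threshold (assumed 1-5 scale)."""
    if not ratings:
        raise ValueError("no ratings to score")
    satisfied = sum(1 for r in ratings if r >= satisfied_threshold)
    return 100 * satisfied / len(ratings)


# Made-up ratings for illustration only.
weekly_ratings = [5, 4, 3, 5, 2, 4, 5, 1, 4, 5]
score = csat_score(weekly_ratings)
print(f"CSAT: {score:.0f}%")
```

Tracking a number like this week over week, rather than once a quarter, is one way to notice a dip in satisfaction while there is still time to act on it.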

10. Offer survey respondents a bonus

In some cases, it makes sense to entice customers to take your survey: A variety of data show that incentives can increase survey response rates. These incentives could be a discount, a giveaway, or an account credit.

The key here is to incentivize customers enough that they’re willing to take the survey without giving away the farm. Your incentives need to be something your brand can financially handle, which is why we often recommend credits or free trials in lieu of unrelated gifts or extensive discounts.

Now, you may worry that offering survey respondents a freebie may detract from the quality of your responses. But studies show that likely isn’t the case.

Customer satisfaction survey examples

Now that we’ve talked about best practices for customer satisfaction surveys, let’s take a look at how those look in the wild. Here are a few examples of brands doing customer satisfaction surveys right, highlighting what makes each example great.

In-app examples

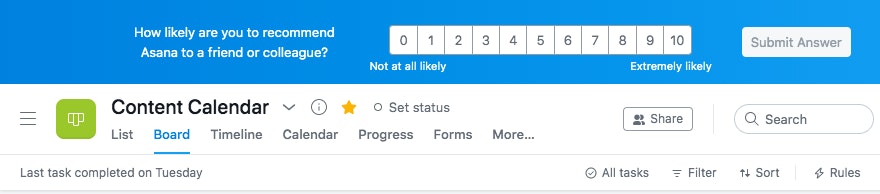

The project management tool Asana makes a point of gathering feedback on customer satisfaction regularly throughout its software. Occasionally, when working in the tool, a survey will appear at the top of the screen asking how likely you are to recommend the tool:

Asking for feedback while someone is actively using your product is great because they're engaged with the product and their experience with the product is fresh in their minds.

Of course, Asana knows that busy business professionals don’t have time to complete a lengthy survey while they're in the middle of working, so they keep it short and simple. Customers only have to click a rating to provide their feedback. After clicking the rating, customers are provided with an opportunity to submit written feedback if desired.

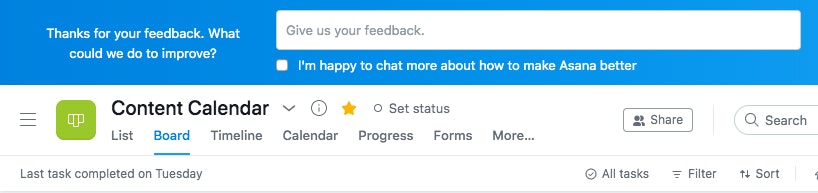

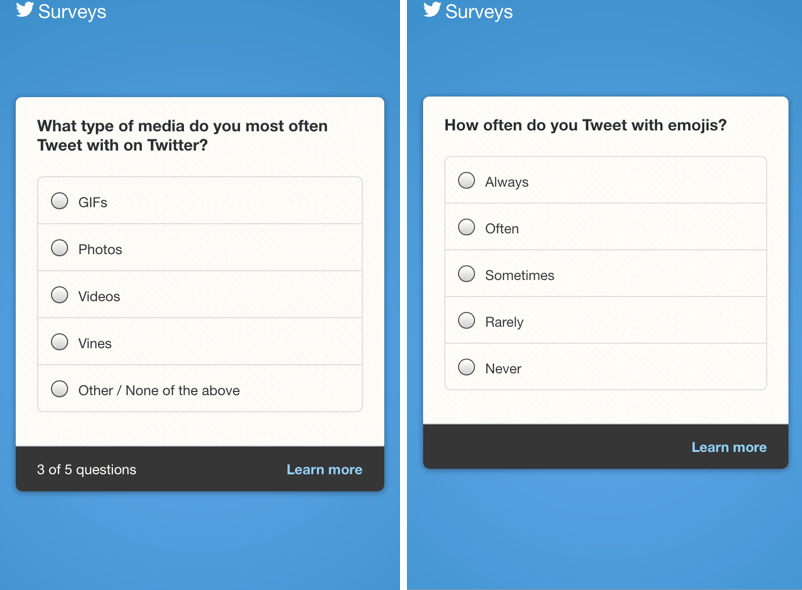

Another great example is Twitter. Twitter makes a point to regularly survey users about all manner of product usage and satisfaction, and their surveys get several things really right.

For one, the copy that invites users to take part in the survey appears right on their Twitter timeline.

The copy also does a great job of setting expectations — it promises “a few quick questions,” and that’s exactly what the survey delivers. Twitter keeps their questions (and the survey as a whole) brief, showing users a progress bar so they always know where they stand within the survey.

Plus, the experience of going from tweet to survey and back to the user’s timeline is seamless and natural.

Email examples

This email survey from Amazon is a great way to gather specific feedback that helps other customers when shopping on the platform. Instead of asking the customer to write a review, it simply asks about the fit of a recently purchased piece of clothing.

Some people simply will not take the time to write a detailed review of a purchase, but with this simple, targeted, one-question survey, Amazon can gather the feedback it needs to make sure future purchasers of this item know which size they should buy to be satisfied with their purchase.

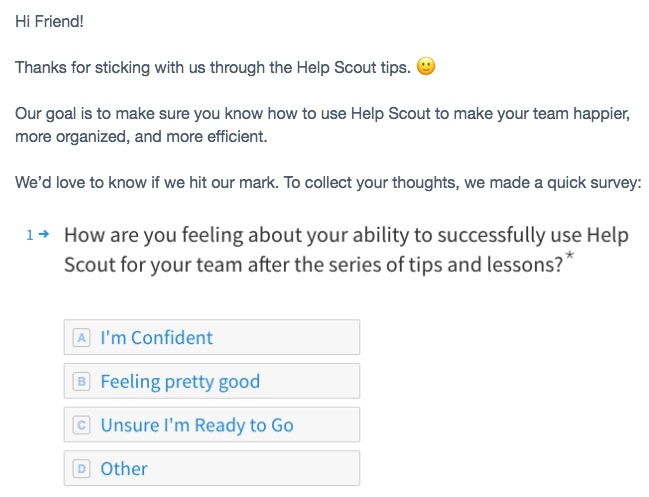

At Help Scout, we regularly check in with customers to gauge their satisfaction with our software and support. At times, we've sent new customers this onboarding email to find out how they're feeling about their ability to use Help Scout:

If a customer clicks on the survey question, they're taken to a succinct, five-question Typeform survey with only one open-ended question to save customers' time.

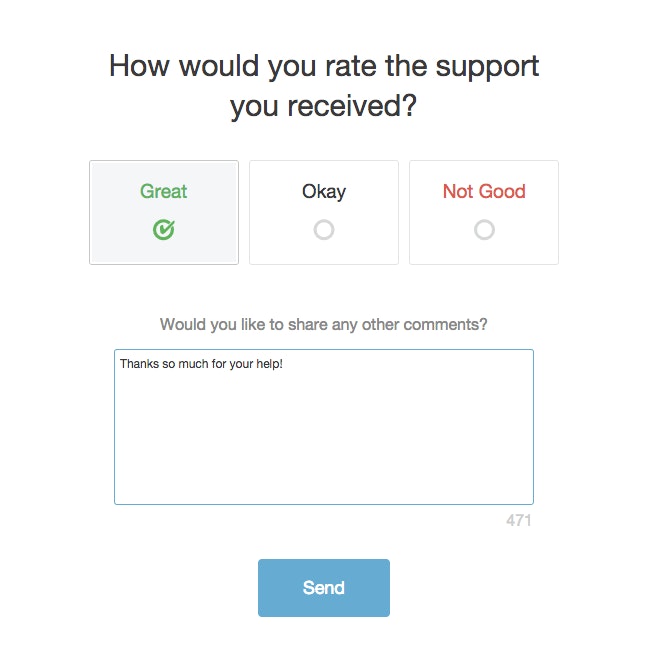

We also make it easy for customers to provide feedback after every interaction with our Customers team with a brief two-question survey.

Sample customer satisfaction survey questions

Now, we know it can be difficult to come up with customer satisfaction survey questions that tick all of the best practice boxes we mentioned before. So to make things a little easier on you, we’ve pulled together 19 sample questions, based on common customer satisfaction survey questions. You can pull these questions directly and copy/paste them into your own surveys, or tweak them as you see fit.

General survey questions

In your own words, describe how you feel about [brand or product].

How can we improve your experience with the company?

What's working for you and why?

What can our employees do better?

Do you have any additional comments or feedback for us?

Product and usage questions

How often do you use the product or service?

Does the product help you achieve your goals?

What is your favorite tool or feature?

What would you improve or add if you could?

Which of the following words would you use to describe our product?

If you could change one thing about our product, what would it be?

Customer support questions

How would you rate the quality of your customer support experience?

Did our support team completely resolve your issue?

How long did it take us to resolve your problem?

Did our support team make you feel valued as a customer?

How easy did we make it to handle your issue?

Loyalty and retention questions

How likely are you to buy from us again?

How likely are you to recommend our company to a friend or colleague?

What would you say to someone who asked about us?

Get valuable feedback with a customer satisfaction survey

Customer satisfaction surveys are a potent and valuable tool in your brand’s fight to win customer hearts and loyalty. With the feedback they provide, you can improve your product, your service, and the overall customer experience — leading to higher revenue and more loyal customers.

If you’re ready to build your survey right now, here are a few high-quality customer satisfaction survey templates we recommend:

A simple and comprehensive customer satisfaction survey template from SurveyMonkey

A short and sweet customer survey template from Microsoft Office

A dynamic and customizable customer satisfaction survey template from Typeform

A short and simple customer survey template from JotForm