Many SaaS companies aim to be customer-centric and put their customers at the heart of their businesses. But you can’t do that if you don’t have a strong understanding of what your customers want and need from your product.

That’s where user feedback comes in. It’s the dotted line that joins up product development with your customers’ needs to ensure that what you’re building is actually what customers want.

But gathering user feedback and using it effectively isn’t easy. It takes time and effort to collect, manage, and act on feedback from your customers.

This is an introductory guide to collecting and managing user feedback at SaaS companies. It will help you understand why it’s important to collect user feedback, give you actionable tools for collecting it, and provide you with inspiration to ask the right questions at the right time.

What is user feedback?

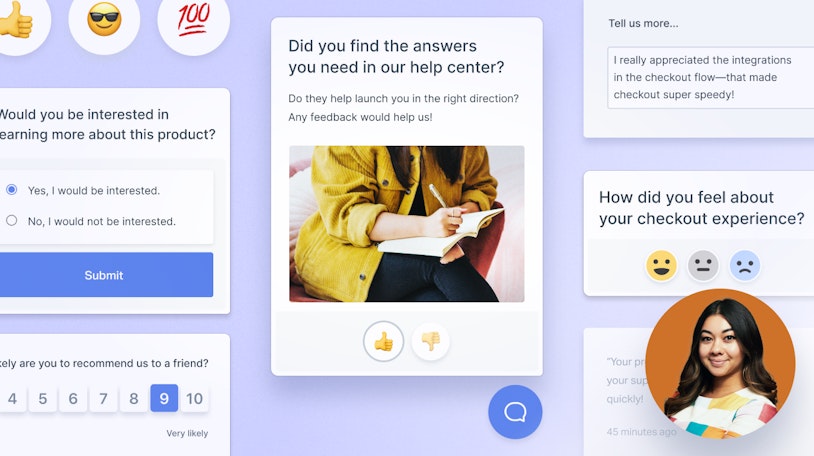

User feedback is information collected from your users and customers on their likes, dislikes, impressions, and requests relating to your SaaS product or service. There are several ways to collect user feedback, including in-product surveys, live chat, and direct customer interviews.

Why is collecting user feedback important?

Collecting and analyzing user feedback helps your company make improvements to your product based on what your users need. It helps you understand whether your customers are happy and what they need from your product in order to keep using it. Collecting user feedback benefits all areas of your business:

Shaping your product roadmap: It gives you real data to guide your decision-making around adding or prioritizing new features.

Guiding your sales team: It shows your salespeople where customers actually get value from your product.

Influencing your marketing content: User feedback can become testimonials for your website, be used as case studies, and help align your marketing message with the value your product delivers.

When to collect user feedback

When collecting feedback from your users, timing is everything. You need to ask for feedback at the right time and at the right intervals. Ask too often, and you’ll annoy your users. Ask at the wrong time, and you won’t get the insights you need.

Here’s an overview of some of the most important times to collect feedback from your users:

Immediately after sign-up: This is a great time to get feedback on your sign-up process. How straightforward was it? Was there anything that was confusing or unclear? Asking for feedback immediately after sign-up helps you understand how you can improve this experience for new customers.

A couple of weeks after sign-up: This is the perfect time to get a feel for how your newest users are getting on with your product. Are they getting value from it? Are there any areas they’re struggling with? How easy is it for them to do what they need to with your product?

When a user converts from a free trial to a paid account: This will help you understand their motivations for upgrading. You can use these insights to understand where your users are getting the most value from your product, as well as areas of friction to help you improve your trial-to-paid conversion rates.

After a support interaction: For many SaaS companies, the service and support experience you provide can be a real differentiator. Asking open-ended questions about a user’s experience can help you understand how you can improve your support as well as identify trends and common themes that may point to bigger problems within your product.

When a user downgrades their account: This is best suited to SaaS products with a self-service model where users can upgrade or downgrade without speaking to a salesperson (otherwise you could just ask them on the call). When a customer downgrades their account, follow up with a short survey asking why they’re downgrading, so you can identify common themes and potential areas for improvement.

When a customer cancels their account: Are they switching to a competitor? Are they simply not using your product? Was there a specific feature or functionality that was missing, meaning they’ve had to look for an alternative solution? Surveying customers once they’ve cancelled can provide valuable insights to help you retain more customers in the future.

When a customer renews their subscription: Equally, if they’ve been with you for a year and decide to renew their subscription for another 12 months, this is a great time to ask for feedback. You want to understand how happy they are with your product, as well as identify areas for improvement.
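One way to respect the “ask too often, and you’ll annoy your users” warning is to pair these trigger moments with a cap on how often any one user is prompted. The Python sketch below illustrates the idea. The event names, survey labels, and 30-day gap are hypothetical placeholders rather than recommendations, and the function assumes you already record when each user was last asked for feedback.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical lifecycle events mapped to the feedback request each one triggers.
SURVEY_FOR_EVENT = {
    "signed_up": "sign-up experience survey",
    "two_weeks_after_signup": "NPS survey",
    "converted_to_paid": "upgrade survey",
    "support_ticket_closed": "CSAT survey",
    "downgraded": "downgrade survey",
    "cancelled": "cancellation survey",
    "renewed": "NPS survey",
}

# Assumed minimum gap between two feedback requests to the same user.
MIN_GAP = timedelta(days=30)


def survey_to_send(
    event: str, last_prompted: Optional[datetime], now: datetime
) -> Optional[str]:
    """Return the survey to send for this event, or None if we should stay quiet."""
    survey = SURVEY_FOR_EVENT.get(event)
    if survey is None:
        return None  # not a feedback moment
    if last_prompted is not None and now - last_prompted < MIN_GAP:
        return None  # asked too recently; skip to avoid survey fatigue
    return survey


now = datetime(2024, 3, 1)
print(survey_to_send("support_ticket_closed", now - timedelta(days=5), now))  # → None
print(survey_to_send("renewed", now - timedelta(days=45), now))  # → NPS survey
```

A one-off moment such as cancellation may be worth exempting from the cap, since you won’t get another chance to ask.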

How to collect user feedback

There are a number of different types of tools you can use to collect user feedback. Below, we've provided five different types of user feedback tools, a few specific tool suggestions, and details on when it's appropriate to use each type of tool.

1. Net Promoter Score (NPS)

NPS tools are dedicated tools for conducting Net Promoter Score surveys. NPS measures customer loyalty and satisfaction by asking users how likely they are to recommend your product to others on a scale from 0–10.

You can also follow up on this rating question by asking them to share their experiences and opinions in their own words. This gives you more detail and can be a fantastic source of insight.

For example, feedback from your NPS survey can guide your product roadmap or be used as testimonials (as long as you have the user’s permission).
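Responses on the 0–10 scale are usually rolled up into a single score: ratings of 9–10 count as promoters, 0–6 as detractors, and 7–8 as passives. NPS is the percentage of promoters minus the percentage of detractors, so it ranges from -100 to 100. A minimal Python sketch of that calculation (the function name `net_promoter_score` is our own):

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Return NPS (-100 to 100) from a list of 0-10 ratings.

    Promoters rate 9-10, detractors 0-6; passives (7-8) only count
    toward the total number of responses.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    for r in ratings:
        if not 0 <= r <= 10:
            raise ValueError(f"rating out of range: {r}")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


responses = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(responses))  # → 20.0 (5 promoters, 3 detractors, 10 responses)
```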

When to use NPS tools:

A couple of weeks after sign-up.

After a customer renews their subscription.

Examples of NPS tools:

2. Customer Satisfaction (CSAT)

CSAT tools are dedicated tools for carrying out customer satisfaction surveys. A customer satisfaction survey is a simple way to ask your customers about their recent experience with your product or service.

You can send CSAT surveys to understand how happy users are with something you’ve done (like providing support), or certain aspects of your products/services (like your onboarding process).
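CSAT is often asked on a 1–5 scale, and one widely used convention reports the score as the percentage of respondents who chose one of the top two ratings (4 or 5). Other conventions exist, so treat this Python sketch as an illustration of that one approach; the function name `csat_score` is hypothetical.

```python
def csat_score(ratings: list[int]) -> float:
    """Return CSAT as the percentage of 1-5 ratings that are 4 or 5."""
    if not ratings:
        raise ValueError("need at least one rating")
    for r in ratings:
        if not 1 <= r <= 5:
            raise ValueError(f"rating out of range: {r}")
    satisfied = sum(1 for r in ratings if r >= 4)  # top-two-box responses
    return 100 * satisfied / len(ratings)


print(csat_score([5, 4, 3, 5, 2, 4, 5, 1]))  # → 62.5
```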

When to use CSAT tools:

After a support interaction.

Examples of CSAT tools:

3. In-app feedback tools

These tools help you collect feedback from users in-app (or on-website).

The most common way to integrate in-app feedback tools is as a pop-up in your app or on your website that appears on specific pages or after a user has completed a specific action. For example, you may use tools to collect feedback after a new user has signed up for an account or to collect feedback on a new feature you’ve just launched.
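The trigger logic behind such a pop-up can be sketched as a small rule check: show a prompt when the user lands on one of its pages or completes one of its actions, and never show the same prompt to the same user twice. In this Python sketch, `PromptRule`, the prompt IDs, page paths, and action names are all hypothetical; real in-app feedback tools define their own targeting options.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptRule:
    """Hypothetical targeting rule for one in-app feedback pop-up."""
    prompt_id: str
    trigger_pages: set[str] = field(default_factory=set)
    trigger_actions: set[str] = field(default_factory=set)


def should_show(
    rule: PromptRule, page: str, action: Optional[str], seen_prompts: set[str]
) -> bool:
    """Decide whether to show this pop-up for the user's current page and action."""
    if rule.prompt_id in seen_prompts:
        return False  # each user sees a given prompt at most once
    on_target_page = page in rule.trigger_pages
    did_target_action = action is not None and action in rule.trigger_actions
    return on_target_page or did_target_action


new_feature = PromptRule("reports_v2_feedback", trigger_pages={"/reports"})
after_signup = PromptRule("signup_feedback", trigger_actions={"completed_signup"})

print(should_show(new_feature, "/reports", None, set()))  # → True
print(should_show(after_signup, "/welcome", "completed_signup", {"signup_feedback"}))  # → False
```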

When to use in-app feedback tools:

When you’ve launched a new feature.

Immediately after a new user signs up.

4. Survey tools

From time to time, you may want to conduct more extensive surveys with your users — beyond quick NPS or CSAT surveys. For this, it’s best to use a dedicated survey tool rather than simply emailing your users a list of questions, as these tools make it easier to collate and analyze the responses you get.

When to use survey tools:

When a customer upgrades or downgrades their account.

When a customer cancels their account.

To gather more general product feedback.

Examples of survey tools:

5. Customer interviews

We couldn’t share examples of how to collect user feedback without mentioning customer interviews. While interviews are more time-consuming than sending out an NPS survey, or even putting together a broader user feedback survey, they are an invaluable source of feedback.

A 30-minute call with a customer where you can hear them speak in their own words about their experience using your SaaS product — good and bad — will provide a wealth of insights.

It’s more difficult to analyze and distill insights from an interview into actionable next steps for your team, but it’s well worth putting in the time and energy to do so.

10 actionable user feedback questions to ask

The questions you ask to collect user feedback will vary depending on what you want to achieve and who you’re asking. You’ll ask a churning customer different questions than someone who’s just signed up, and gathering feedback on a new feature calls for different questions than assessing how well your support team is doing.

We’ve pulled together a list of 10 user feedback questions we recommend you use to get closer to your customers and their needs. Of course, there are many more questions you could (and should) ask, but these are a great place to start:

How likely are you to recommend our product? (NPS)

How satisfied are you with our service? (CSAT)

What is your main goal for using our product?

What changed for you after you started using our product?

Where did you first hear about us?

Have you used our product before?

Why did you choose our product over other options?

What feature could we add to make our product better for you?

What feature(s) could you not live without?

Do you feel our product is worth the cost?

What to do with the feedback you receive

Collecting user feedback is just the first part of the process. You then need to analyze it and use it to improve your product, service, and company.

Here are our top five tips for understanding, managing, and acting on the feedback you get from your users.

1. Don’t treat all feedback equally

Some feedback you receive will carry more weight than other feedback. For example, an early customer who’s been with you a long time and has seen your product develop will likely offer more insight than a customer who’s just started using it.

Equally, someone who’s just switched over from a competitor will have different insights than someone who’s never used your type of product before.

When receiving feedback from your users — especially if it’s more in-depth feedback like survey responses — remember to consider the additional context around that feedback, like who they are, how long they’ve been using your product, and how closely they fit your ideal customer profile.

2. Identify common themes

Don’t look at each piece of feedback you receive in isolation, and don’t spring into action as soon as your first survey response comes in. It’s much more helpful to collect a larger amount of user feedback and then analyze it to identify common themes running throughout.

This will help you avoid common mistakes like spending hours developing a new feature that doesn’t align with how your product delivers value just because one customer mentioned it in a survey.
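If you tag each response with one or more themes (by hand or with whatever tooling you use), surfacing common themes can be as simple as counting how many distinct customers mention each one. This Python sketch assumes the tagging has already happened; the function name, the `(customer_id, themes)` tuple format, and the default threshold of three customers are illustrative choices, not a standard.

```python
def common_themes(
    tagged_feedback: list[tuple[str, set[str]]], min_customers: int = 3
) -> list[tuple[str, int]]:
    """Themes raised by at least `min_customers` distinct customers, most common first."""
    customers_by_theme: dict[str, set[str]] = {}
    for customer_id, themes in tagged_feedback:
        for theme in themes:
            # A set means repeat mentions by one customer count only once.
            customers_by_theme.setdefault(theme, set()).add(customer_id)
    counts = [(theme, len(ids)) for theme, ids in customers_by_theme.items()]
    # Sort by count (descending), then alphabetically for a stable order.
    counts.sort(key=lambda item: (-item[1], item[0]))
    return [(theme, n) for theme, n in counts if n >= min_customers]


feedback = [
    ("c1", {"reporting", "pricing"}),
    ("c2", {"reporting"}),
    ("c3", {"reporting", "integrations"}),
    ("c1", {"reporting"}),
    ("c4", {"pricing"}),
    ("c5", {"pricing"}),
    ("c6", {"dark mode"}),
]
print(common_themes(feedback))  # → [('pricing', 3), ('reporting', 3)]
```

The single request for “dark mode” stays below the threshold, which is exactly the kind of one-off mention the paragraph above warns against acting on too quickly.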

3. Track trends over time

Similarly, a single piece of feedback is just a single data point with no context.

For metrics like NPS or CSAT, keep an eye on how they fluctuate each month or each quarter, and especially look out for big changes. You can also use sentiment analysis to analyze the tone and general sentiment behind longer-form survey responses.

By tracking these trends over time, you’ll have a clearer picture of how the changes you make to your product are affecting your customers and how happy (or not) they are with your service.
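For NPS, tracking trends can mean grouping responses by month, computing a score for each month, and flagging large month-over-month swings. The Python sketch below does that using the standard promoter (9–10) and detractor (0–6) bands; the 10-point threshold for a “big change” and the function names are assumptions to adapt to your own response volumes.

```python
from collections import defaultdict
from datetime import date


def nps(ratings: list[int]) -> float:
    """NPS for one batch of 0-10 ratings: % promoters minus % detractors."""
    promoters = sum(r >= 9 for r in ratings)
    detractors = sum(r <= 6 for r in ratings)
    return 100 * (promoters - detractors) / len(ratings)


def monthly_nps(responses: list[tuple[date, int]]) -> dict[str, float]:
    """Group (date, rating) pairs by calendar month and score each month."""
    by_month: dict[str, list[int]] = defaultdict(list)
    for when, rating in responses:
        by_month[when.strftime("%Y-%m")].append(rating)
    # "YYYY-MM" keys sort chronologically as plain strings.
    return {month: nps(by_month[month]) for month in sorted(by_month)}


def big_changes(series: dict[str, float], threshold: float = 10.0) -> list[tuple[str, float]]:
    """Return (month, change) wherever the score moved by at least `threshold`."""
    months = list(series)
    flagged = []
    for prev, curr in zip(months, months[1:]):
        delta = series[curr] - series[prev]
        if abs(delta) >= threshold:
            flagged.append((curr, delta))
    return flagged


responses = [
    (date(2024, 1, 5), 10), (date(2024, 1, 9), 9), (date(2024, 1, 20), 7), (date(2024, 1, 28), 3),
    (date(2024, 2, 3), 10), (date(2024, 2, 14), 9), (date(2024, 2, 21), 8), (date(2024, 2, 25), 9),
    (date(2024, 3, 2), 10), (date(2024, 3, 10), 9), (date(2024, 3, 15), 9), (date(2024, 3, 30), 7),
]
scores = monthly_nps(responses)
print(scores)  # → {'2024-01': 25.0, '2024-02': 75.0, '2024-03': 75.0}
print(big_changes(scores))  # → [('2024-02', 50.0)]
```

With only a handful of responses per month, swings this large can be noise, so quarterly buckets may give a steadier picture for smaller user bases.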

4. Prioritize product development

You can use user feedback to help shape your product roadmap. If you’re seeing multiple requests for the same feature time after time, it might be worth prioritizing that in your product roadmap.

But when it comes to feature requests, it’s important to consider the context behind each one. A customer who’s been using your product for a couple of years will have a better feel for what they need from your product compared with someone who’s halfway through a free trial.

Equally, you should remember that just because someone requests a feature, you don’t have to build it. User feedback is useful for guiding your product roadmap and providing data to back up the decisions you make, but it shouldn’t be the only thing you rely on for planning future product development.
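One way to factor context into a tally of feature requests is to weight each request by who made it (tenure, trial status, fit with your ideal customer profile) and to count each customer only once per feature. In this Python sketch, the `FeatureRequest` fields and every weight value are hypothetical and purely illustrative; the ranking is one input to roadmap discussions, not a decision.

```python
from dataclasses import dataclass


@dataclass
class FeatureRequest:
    feature: str
    customer_id: str
    months_as_customer: float
    on_trial: bool
    fits_icp: bool  # fits your ideal customer profile


def request_weight(req: FeatureRequest) -> float:
    # Hypothetical weights for illustration only, not a recommended standard.
    if req.on_trial:
        weight = 0.5
    elif req.months_as_customer >= 12:
        weight = 1.5
    else:
        weight = 1.0
    if req.fits_icp:
        weight *= 2
    return weight


def rank_features(requests: list[FeatureRequest]) -> list[tuple[str, float]]:
    """Sum weighted requests per feature, highest score first."""
    scores: dict[str, float] = {}
    seen: set[tuple[str, str]] = set()
    for req in requests:
        key = (req.feature, req.customer_id)
        if key in seen:
            continue  # count each customer once per feature
        seen.add(key)
        scores[req.feature] = scores.get(req.feature, 0.0) + request_weight(req)
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


requests = [
    FeatureRequest("csv export", "a", 24, False, True),
    FeatureRequest("csv export", "b", 3, False, False),
    FeatureRequest("dark mode", "c", 0, True, False),
    FeatureRequest("dark mode", "d", 0, True, False),
    FeatureRequest("dark mode", "e", 0, True, False),
    FeatureRequest("dark mode", "c", 0, True, False),
    FeatureRequest("sso", "f", 14, False, True),
]
print(rank_features(requests))  # → [('csv export', 4.0), ('sso', 3.0), ('dark mode', 1.5)]
```

Here “dark mode” has the most raw requests but ranks last, because all of them come from trial users outside the ideal customer profile.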

5. Speak your customers’ language

User feedback, interviews, and survey responses can be a gold mine for your marketing team. Interviews are especially useful for hearing how users describe your product and features in their own words.

Your marketing team can use this to sprinkle key phrases through your website copy and marketing content, aligning your messaging with the way real users talk about your product. This will make your marketing feel more genuine and help build a stronger connection with potential future customers.

Collecting user feedback is just the start

Regularly collecting user feedback can make a real difference to your SaaS business. Listening to what your customers really need from your product and understanding how your product delivers value to them in real life will bring you closer to your customers, making it easier to stay customer-centric as you grow.

But collecting user feedback is just the start of the process: By analyzing it to understand common themes and identifying trends and patterns over time, you can use those insights to improve your product and business.