In this series, we’ll give you a peek behind the scenes at how we on the Help Scout support team use Help Scout ourselves!

Custom fields and tags are both handy tools for tracking, organizing, and adding context to customer conversations. You can use them to filter your search results and reports or even trigger automatic workflows. Each has its own strengths, so let’s take a look at some real examples from the Help Scout team’s own inbox!

When we use tags vs. custom fields

Tags are set up at the account level, so they’re great for tracking things across multiple inboxes. They’re best for info that doesn’t need to be tracked on every single conversation. For example, we only receive a few applications to our Startup Program per month, so we use the startup-program tag for that.

Custom fields are set up at the inbox level, and they’re best for info you want to track on most conversations. Unlike tags, you can even set a custom field as required, so your team needs to fill it in before replying and changing the status to “closed.” In our inbox, we have a required dropdown custom field called “Request Type” with options such as “Feature Request,” “Bug,” and “Investigation.”

Keeping things consistent

Director of support Katie Harlow points out, “It's important the whole support team is on the same page about when and how to create new tags or custom fields. We always discuss and document new tags and custom fields as a team. This helps prevent confusion from tags with duplicate meanings or even typos.”

For example, we use a standard naming convention when we create a new tag for conversations related to a status event: status-year-month-day. This helps ensure clear and consistent tagging, even during times of stress.
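If you ever generate tag names from a script or checklist, a naming convention like this one is easy to encode. Here is a minimal Python sketch. The function name is hypothetical, and the four-digit year and zero-padded month and day are assumptions, since the convention above only fixes the order of the parts.

```python
from datetime import date


def status_tag(event_date: date) -> str:
    """Build a tag that follows the status-year-month-day pattern."""
    # Assumption: four-digit year, zero-padded month and day.
    return f"status-{event_date:%Y-%m-%d}"


print(status_tag(date(2024, 3, 7)))  # prints "status-2024-03-07"
```

Building the tag from a real date value, rather than typing it by hand, is one way to avoid typos when things are stressful.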

Using tags to prioritize conversations in the queue

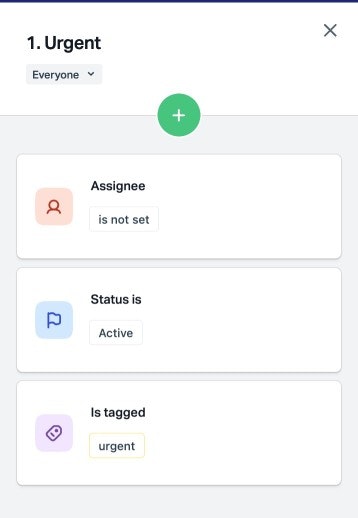

We use tags to power various views in our inbox. These help us see at a glance exactly which conversations need to be prioritized.

One is our urgent view, which displays a copy of conversations that have the urgent tag.

An automatic workflow adds the urgent tag to conversations that contain certain phrases in the body or subject. We can also add the urgent tag manually.

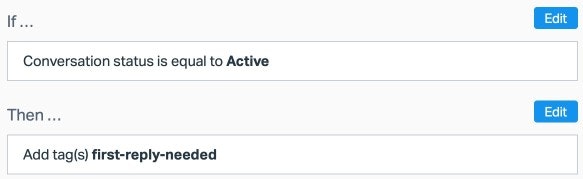

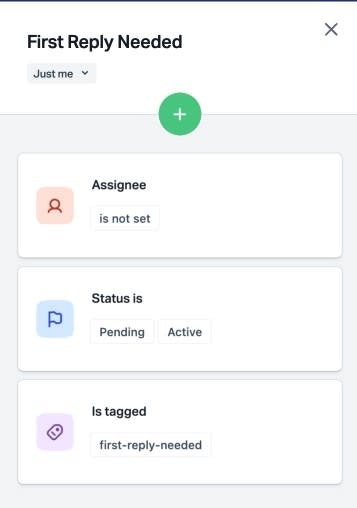
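The matching logic behind a workflow like the urgent one can be sketched in a few lines of Python. This is an illustration only, not how Help Scout workflows are implemented: the phrases, the dictionary shape, and the function names are all hypothetical.

```python
# Hypothetical phrases; pick the ones that signal urgency for your customers.
URGENT_PHRASES = ("urgent", "asap", "site is down")


def should_tag_urgent(subject: str, body: str) -> bool:
    """Return True if any urgent phrase appears in the subject or body."""
    text = f"{subject}\n{body}".lower()
    return any(phrase in text for phrase in URGENT_PHRASES)


def apply_urgent_workflow(conversation: dict) -> dict:
    """Return a copy of the conversation, adding the urgent tag on a match."""
    tags = set(conversation.get("tags", []))
    if should_tag_urgent(conversation.get("subject", ""), conversation.get("body", "")):
        tags.add("urgent")
    # Tags added manually are kept whether or not a phrase matched.
    return {**conversation, "tags": sorted(tags)}
```

Note that a plain substring check like this would also match "urgent" inside a longer word, so a real rule would likely need more careful phrase choices.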

You can also use tags, workflows, and views to prioritize the metrics your team is focused on. For example, here’s how we’ve experimented with keeping an eye on conversations waiting for a first response:

First, a workflow tags all new conversations.

Then a view displays all conversations that are awaiting a first reply.

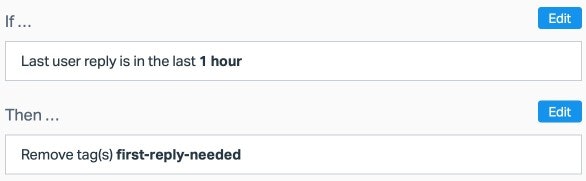

Another workflow removes the tag when someone on the team replies, and the conversation disappears from the view.
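The three steps above amount to a small state machine: tag on creation, filter on the tag, untag on reply. Here is a rough Python model of that flow. The tag name, class, and function names are hypothetical, and the real work happens in workflows and views rather than in code.

```python
from dataclasses import dataclass, field

FIRST_REPLY_TAG = "awaiting-first-reply"  # hypothetical tag name


@dataclass
class Conversation:
    subject: str
    tags: set = field(default_factory=set)


def on_created(conv: Conversation) -> None:
    """First workflow: tag every new conversation."""
    conv.tags.add(FIRST_REPLY_TAG)


def on_team_reply(conv: Conversation) -> None:
    """Second workflow: remove the tag once someone on the team replies."""
    conv.tags.discard(FIRST_REPLY_TAG)


def awaiting_first_reply_view(conversations: list) -> list:
    """View: show only conversations that still carry the tag."""
    return [c for c in conversations if FIRST_REPLY_TAG in c.tags]


a, b = Conversation("Billing question"), Conversation("Login issue")
for conv in (a, b):
    on_created(conv)
on_team_reply(a)
print([c.subject for c in awaiting_first_reply_view([a, b])])  # prints "['Login issue']"
```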

Did you know? We can export a list of your customers associated with conversations that have a specific tag! Just contact our support team.

Using custom fields and tags to understand customer trends

The All Channels Report is where we go to see an overview of trends happening in our account, including the top 50 most used tags and the percentage of conversations with each custom field.

Director of product support Elyse Mankin explains, “Categorizing your queue helps you understand both qualitatively and quantitatively what's happening for your customers as your queue volume fluctuates over time. If your queue volume suddenly spikes 25% in a given month, your support and leadership teams will likely want to understand why.

“Custom fields make it easy to quickly see which buckets of conversations are causing that spike in volume. It may be totally expected if your team is currently helping customers through a migration or your customers are celebrating a job well done on a new, exciting product release.

“Custom fields give you a starting point to understand the broader themes that are coming up for your customers. Once you understand the themes, you can start to think about how you might problem-solve, whether that means documentation updates, more customer education resources, internal training, or product changes.”

Using custom fields to drive product change

Categorizing our queue gives us concrete information to advocate for product improvements on behalf of our customers, and that helps us make smart, data-driven decisions as a company. Product manager K’Shelle Waller explains, “Custom fields are our jumping-off point for product development. We use reports to analyze which features we’re getting the most requests about based on our ‘Request Type’ and ‘Feature’ custom fields.

“For example, if I see 50% of our feature request conversations are about Workflows, I can then qualitatively go through those conversations in the inbox to get a clear sense of what exactly customers are asking for to make sure we’ve captured that in our Jira tickets, which is where we log feature requests.

“It’s an easy way to group conversations together, and it narrows the pool when searching the inbox, too. Rather than searching the phrase ‘AI Drafts,’ I can filter my search by the custom field ‘Feature: AI Drafts,’ which is more likely to give me exactly what I’m looking for.”
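The kind of breakdown K’Shelle describes, the share of feature requests per feature, comes from our reports. If you wanted to reproduce it on exported data, a sketch might look like the following. The dictionary keys mirror the “Request Type” and “Feature” custom fields, but the data shape, the function name, and the sample records are assumptions made up for illustration.

```python
from collections import Counter


def feature_share(conversations: list) -> dict:
    """Percentage of feature-request conversations per 'Feature' value."""
    requests = [c for c in conversations if c.get("Request Type") == "Feature Request"]
    counts = Counter(c.get("Feature", "Unspecified") for c in requests)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {feature: 100 * n / total for feature, n in counts.items()}


# Made-up sample records, not real data.
sample = [
    {"Request Type": "Feature Request", "Feature": "Workflows"},
    {"Request Type": "Feature Request", "Feature": "Workflows"},
    {"Request Type": "Feature Request", "Feature": "AI Drafts"},
    {"Request Type": "Feature Request", "Feature": "Beacon"},
    {"Request Type": "Bug", "Feature": "Workflows"},
]
print(feature_share(sample)["Workflows"])  # prints "50.0"
```

The percentages only narrow the pool. Reading the conversations behind the largest share is still what tells you what customers are asking for.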

Did you know? You can connect custom fields directly to your Beacon contact form! Learn more here.

Using tags to improve our Docs

Beyond informing product decisions, reporting on tag trends helps us identify where to build out our customer education resources, from adding helpful instructions in Docs to planning high-impact webinars.

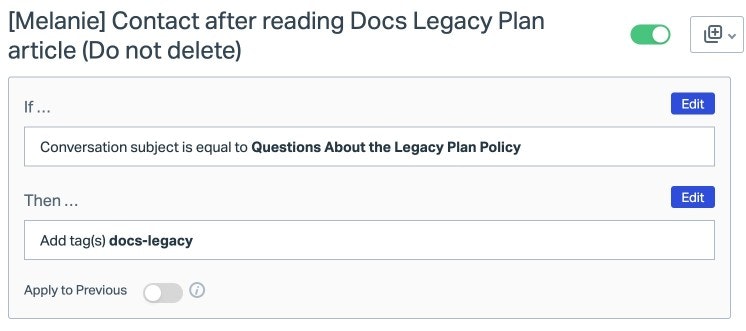

Here’s how our technical writer, Melanie Shears, uses tags to improve our Docs when we release a new feature:

We include a link in the relevant Docs article to open a Beacon so people can contact us with any questions or feedback.

We use Beacon prefill to automatically set the subject of the email to something like “Feedback about [New Feature Name].”

We create an automatic workflow that tags Beacon submissions with that exact subject.

When Melanie searches that tag, she can easily find and review all of the related conversations. Once she’s identified common points of confusion, she’s able to add clarifying details in the Docs article to make it as helpful as possible.

Ready to dive into your own tags and custom fields? Check out our Docs to get started: